This is part two of my blog series on how to improve clustering with deep learning techniques. In the previous part, I explained how clustering works and why it does not perform well with large and complex datasets. In this part, I will describe how deep learning techniques can be used to perform clustering and how I aim to further improve it.

Using Deep Learning to Create Better Features

As mentioned in part one, each pixel of an image that we want to cluster represents a dimension or feature. Fortunately, we are not interested in every single pixel and can therefore reduce the number of features (i.e. relevant pixels), which will help us to cluster better. One way to identify and extract the most important features is by using deep learning. Nowadays, there are many different deep learning methods that can be used to create those new feature sets for clustering. One of the most commonly used methods is the autoencoder.

As illustrated in figure 4, an autoencoder consists of three parts: an encoder (shown in green), a bottleneck part (red) and a decoder (blue). The encoder learns to ‘map’ the input (e.g. an image) into a lower-dimensional representation by extracting the most important information of the input using non-linear transformations. These lower-dimensional and abstract features are then stored in the bottleneck layer. Next, the decoder takes this set of features from the bottleneck part and learns to reconstruct the original input. To ensure that the autoencoder learns to reconstruct the input well, its performance is judged by how similar the original and reconstructed data are.

To use this network for clustering, we just have to train the autoencoder on the data that we want to cluster. Once we achieve a good reconstruction quality, we are done training and can use the autoencoder for clustering. To do this, we run all the data through the encoder and receive the lower-dimensional representation of the data that is held in the bottleneck part (called the ‘latent representation’). This latent representation can now be used as an input for the clustering methods.

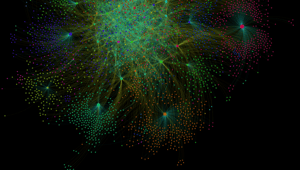
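To make the encoder, bottleneck and decoder more concrete, here is a minimal sketch of a tiny autoencoder written with NumPy. Everything in it is an assumption made for illustration: the toy data, the layer sizes, the `tanh` encoder, the learning rate and the names `encode` and `decode`. A real image autoencoder would be much deeper and would be built with a deep learning framework.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy data (assumed): 200 points with 8 features that only vary along 2 directions.
hidden_factors = rng.normal(size=(200, 2))
X = hidden_factors @ rng.normal(scale=0.5, size=(2, 8))

d_in, d_latent = X.shape[1], 2
W_enc = rng.normal(scale=0.1, size=(d_in, d_latent))  # encoder weights
W_dec = rng.normal(scale=0.1, size=(d_latent, d_in))  # decoder weights


def encode(x):
    """Encoder: non-linear map from the input to the bottleneck."""
    return np.tanh(x @ W_enc)


def decode(z):
    """Decoder: reconstruct the input from the latent representation."""
    return z @ W_dec


def reconstruction_error(x):
    """Mean squared difference between the input and its reconstruction."""
    return float(np.mean((decode(encode(x)) - x) ** 2))


error_before = reconstruction_error(X)

learning_rate = 0.01  # hypothetical value, chosen for this toy problem
for _ in range(3000):
    Z = encode(X)
    X_hat = decode(Z)
    grad_out = 2.0 * (X_hat - X) / X.size   # gradient of the error w.r.t. X_hat
    grad_W_dec = Z.T @ grad_out
    grad_Z = grad_out @ W_dec.T
    grad_pre = grad_Z * (1.0 - Z ** 2)      # derivative of tanh
    grad_W_enc = X.T @ grad_pre
    W_dec -= learning_rate * grad_W_dec
    W_enc -= learning_rate * grad_W_enc

error_after = reconstruction_error(X)

# After training, only the encoder is needed: its output is what we cluster.
latent = encode(X)
print(error_before, error_after, latent.shape)
```

The array `latent` has one row per data point and one column per bottleneck dimension, and it is this array (not the original 8 features) that would be handed to a clustering method such as k-means.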

Since we use the latent representations of the data as input for our clustering methods, it becomes apparent that learning a better representation of the features in that bottleneck part (called the ‘latent space’) will also affect the clustering performance. Because of this direct relationship, many researchers who work on improving clustering methods started developing deep learning methods that can learn more cluster-friendly latent representations of the input [2, 3]. While many methods have been proposed over the years, there was no known tool to directly influence how cluster-friendly the latent representations would become. Recently, however, a team from Google Brain has released a method to do just that. They call it ‘Adversarially Constrained Autoencoder Interpolation’ [1] (yes, researchers love complicated-sounding terms) and that’s what we’ll go into next.

Adversarially Constrained Autoencoder Interpolation (ACAI)

The overall structure of the proposed method entails two parts: a normal autoencoder and a critic. The idea behind the critic is that it helps the autoencoder to create a latent space where semantically meaningful features (e.g. all 0’s of the handwritten digits) are naturally grouped in one area of the latent space. To achieve this, the autoencoder takes two images, mixes them in the latent space (see figure 5) and decodes this mixed representation. The critic then learns to guess to what degree this image was ‘mixed’ and gives this information back to the autoencoder as negative feedback. This negative feedback encourages the autoencoder to do two things. First, the encoder is forced to adapt its latent space in a way that makes a mixed representation easier to decode into a realistic-looking image. Second, the decoder learns to better decode these mixed representations (while still being able to decode non-mixed latent representations). However, exactly how the critic’s behaviour affects the autoencoder is currently unclear and subject to research.

More formally, the process can be described as follows (and as illustrated in figure 5). The encoder, bottleneck part and decoder are the same as in a normal autoencoder. However, here the encoder and bottleneck part are shown twice because this approach takes two data points (e.g. two images) and encodes them one after the other, resulting in the latent representations z2 and z3 for the shown digits 2 and 3, respectively. Mathematically, the bottleneck part is a vector with a certain length d (for dimensionality), which means that you can do arithmetic with it. Thus, to do the above-mentioned ‘mixing’ of the two representations, the autoencoder picks a random value alpha between 0 and 0.5. It then mixes the latent representations of both images using the following equation:

mixed representation = (latent representation 1 * alpha) + (latent representation 2 * (1 - alpha))

This operation, called interpolation, results in a latent representation that incorporates the latent vectors of both images to certain degrees. This mixed representation is then sent to the decoder, which tries to create a reconstruction that, ideally, looks like a realistic image rather than an obvious blend of the two inputs.
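As a small worked example of this mixing step, the sketch below interpolates two made-up latent vectors with plain Python lists. The function name `interpolate_latents` and the example values are assumptions chosen for illustration; only the formula and the range of alpha come from the description above.

```python
import random


def interpolate_latents(z_a, z_b, alpha):
    """Mix two latent vectors: alpha * z_a + (1 - alpha) * z_b, element by element."""
    if len(z_a) != len(z_b):
        raise ValueError("both latent vectors must have the same length d")
    return [alpha * a + (1.0 - alpha) * b for a, b in zip(z_a, z_b)]


# Two made-up latent representations of length d = 3.
z_first = [1.0, 0.0, 2.0]
z_second = [0.0, 4.0, 2.0]

# With a fixed alpha the result is easy to check by hand.
print(interpolate_latents(z_first, z_second, 0.25))  # [0.25, 3.0, 2.0]

# During training, alpha would be drawn at random between 0 and 0.5.
random.seed(0)
alpha = random.uniform(0.0, 0.5)
z_mixed = interpolate_latents(z_first, z_second, alpha)
```

Note that with alpha at most 0.5, the mixed vector is never closer to the first representation than to the second; at alpha = 0 it is exactly the second representation and at alpha = 0.5 it is the midpoint of the two.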

After the autoencoder has decoded the mixed representation, the reconstructed image is sent to a second network, called the critic. This critic is structured like the encoder, which means that it learns to create a latent representation of the reconstructed image. It then uses this latent representation to ‘guess’ the alpha value that the autoencoder used to mix the two images.

Figure 5. Source [1].

The performance of the critic (i.e. how close its prediction was to the real alpha value) is then sent to the autoencoder as feedback. The better the critic’s performance, the higher the ‘penalty’ for the autoencoder, because a critic that can still detect the mixing means that the autoencoder did not perform well.
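The feedback loop can be written down as two loss functions. The sketch below follows my reading of the formulation in [1], in a simplified form: the critic is scored on how close its guess is to the true alpha, while the autoencoder pays for its reconstruction error plus a penalty that grows whenever the critic outputs a non-zero alpha for a decoded mix. The function names and the `penalty_weight` value are assumptions for illustration, and the paper adds a further regularisation term to the critic that is left out here.

```python
import numpy as np


def critic_loss(predicted_alpha, true_alpha):
    """The critic wants its guesses to match the alpha values that were used."""
    predicted_alpha = np.asarray(predicted_alpha, dtype=float)
    true_alpha = np.asarray(true_alpha, dtype=float)
    return float(np.mean((predicted_alpha - true_alpha) ** 2))


def autoencoder_loss(x, x_reconstructed, predicted_alpha, penalty_weight=0.5):
    """Reconstruction error plus a penalty when the critic detects mixing.

    penalty_weight is a hypothetical setting for this sketch.
    """
    x = np.asarray(x, dtype=float)
    x_reconstructed = np.asarray(x_reconstructed, dtype=float)
    predicted_alpha = np.asarray(predicted_alpha, dtype=float)
    reconstruction = float(np.mean((x - x_reconstructed) ** 2))
    # The penalty is zero only if the critic believes nothing was mixed.
    penalty = float(np.mean(predicted_alpha ** 2))
    return reconstruction + penalty_weight * penalty


# Made-up batch of two 'images' with three pixels each, reconstructed perfectly.
x = np.zeros((2, 3))
x_hat = np.zeros((2, 3))
true_alpha = np.array([0.2, 0.4])

# Case 1: the critic guesses alpha exactly, so the autoencoder is penalised.
caught = autoencoder_loss(x, x_hat, predicted_alpha=true_alpha)
# Case 2: the critic is fooled and predicts 0, so the penalty vanishes.
fooled = autoencoder_loss(x, x_hat, predicted_alpha=np.zeros(2))
print(caught, fooled, critic_loss(np.zeros(2), true_alpha))
```

In this toy batch the autoencoder's loss is larger when the critic guesses correctly than when it is fooled, while the critic's own loss moves in the opposite direction.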

The goal of both networks (autoencoder and critic) is to achieve a good performance; however, these two goals are mutually exclusive. Every time the critic guesses the alpha value correctly, the autoencoder receives a penalty, thereby automatically lowering its performance score. At the same time, every time the autoencoder creates a realistic-looking image out of the mixed representation, the critic will not be able to guess the correct alpha value, lowering the critic’s performance score. This back and forth creates a ‘battle’ between the two networks. Eventually, it is expected that the autoencoder wins this battle [1]. It appears that the autoencoder manages to create a latent space that is semantically meaningful and, as suggested by the paper, this might be because of the size of the network as well as the added critic. Even more importantly, the authors suggest that the autoencoder learns to encode the images in such a way that semantically similar points (e.g. all 0’s) are clustered together in the latent space [1]. This resulting latent space, in turn, will help the decoder create realistic-looking images, and the critic will not be able to notice that the reconstructed image was based on a mixed representation.

Since all semantically similar points are now close to each other in the lower-dimensional latent space, using this as an input for clustering methods may largely improve clustering performance. To evaluate whether this is true, the clustering accuracy can be calculated once the clustering algorithm (e.g. k-means) has created the clusters. This is done by comparing the ground truth of each data point (i.e. the ‘true’ class it belongs to) with its assigned cluster. If the autoencoder learned to extract the features well, then the latent representation of a data point will be a good representation of the ‘true’ class it belongs to. Assuming that latent representations of the same class should be semantically more similar than latent representations of different classes, pushing these semantically similar points together during the proposed training method should, therefore, improve clustering accuracy.
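One practical detail in this comparison is that cluster numbers are arbitrary: the cluster that contains all the 0’s might be called ‘cluster 7’. A common way to handle this is to look for the one-to-one matching between clusters and classes that gives the most correct assignments. The sketch below does this by brute force, which is an assumption made to keep the example dependency-free; it is only practical for a handful of clusters, and larger problems would normally use an assignment solver instead. The function name and example labels are made up.

```python
from itertools import permutations


def clustering_accuracy(true_labels, cluster_ids):
    """Best fraction of points that agree with their class under any
    one-to-one mapping from cluster ids to class labels (brute force)."""
    if len(true_labels) != len(cluster_ids):
        raise ValueError("need exactly one cluster id per data point")
    classes = sorted(set(true_labels))
    clusters = sorted(set(cluster_ids))
    if len(clusters) > len(classes):
        raise ValueError("this sketch assumes no more clusters than classes")
    best_correct = 0
    # Try every way of giving each cluster its own distinct class label.
    for assigned in permutations(classes, len(clusters)):
        mapping = dict(zip(clusters, assigned))
        correct = sum(mapping[c] == t for c, t in zip(cluster_ids, true_labels))
        best_correct = max(best_correct, correct)
    return best_correct / len(true_labels)


# Nine points from three classes; the cluster ids are arbitrary numbers.
true_labels = [0, 0, 0, 1, 1, 1, 2, 2, 2]
cluster_ids = [2, 2, 2, 0, 0, 1, 1, 1, 1]

accuracy = clustering_accuracy(true_labels, cluster_ids)
print(round(accuracy, 3))  # 0.889, i.e. 8 of the 9 points match
```

Here the best matching maps cluster 2 to class 0, cluster 0 to class 1 and cluster 1 to class 2, which leaves a single point of class 1 in the wrong cluster.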

This improvement, in turn, could have large practical implications for all areas where clustering is used, such as predictive maintenance, customer segmentation and anomaly detection.

So far, this is the only paper that uses a critic in this way to improve the model’s latent space. In the paper, the authors focus on introducing the general method and comparing it to other types of autoencoders. They demonstrate that just adding this critic to a ‘normal’ autoencoder can lead to state-of-the-art clustering performance on image datasets.

Improving Deep Clustering via Adversarially Constrained Interpolation

While the above-mentioned method has been tested on a ‘standard’ autoencoder, there are many questions that remain to be answered about this technique. For example, will it always lead to a better clustering performance or only under certain conditions? And does it work with all types of autoencoders (and there are a lot)? As part of my master’s project, I will investigate these questions by combining this technique with current state-of-the-art autoencoder methods that have been specifically designed for clustering. Last but not least, I will try to open the ‘black box’ of this approach by investigating the changes that occur in the latent space and making these changes more transparent.

Now that we know how autoencoders work and how the proposed method aims to improve them, I will continue in part three of this series by describing the results I obtained in investigating the above-mentioned questions.

--------------

[1] Berthelot, D., Raffel, C., Roy, A., & Goodfellow, I. (2018). Understanding and improving interpolation in autoencoders via an adversarial regularizer. arXiv preprint arXiv:1807.07543.

[2] Guo, X., Gao, L., Liu, X., & Yin, J. (2017, June). Improved Deep Embedded Clustering with Local Structure Preservation. In IJCAI (pp. 1753-1759).

[3] Min, E., Guo, X., Liu, Q., Zhang, G., Cui, J., & Long, J. (2018). A survey of clustering with deep learning: From the perspective of network architecture. IEEE Access, 6, 39501-39514.